
Leader Election and Configuration Files with Contour v0.15

Sep 5, 2019

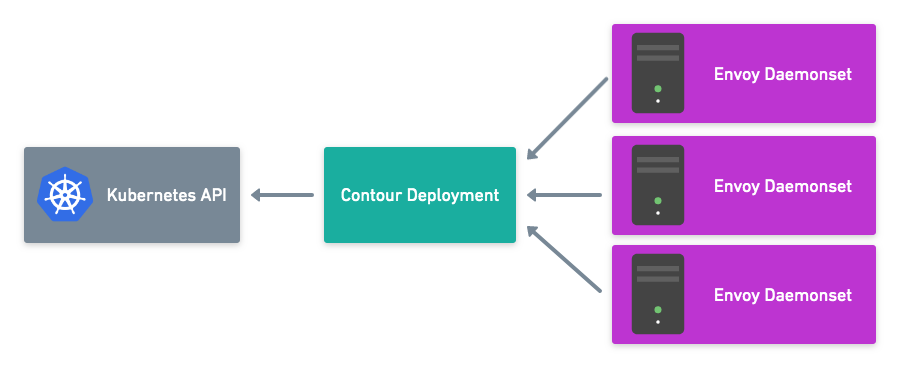

The previous release of Contour improved the split deployment model by securing communication between Envoy and Contour. Our latest release, Contour v0.15, adds leader election, which ensures that all instances of Envoy take their configuration from a single Contour instance.

Leader Election

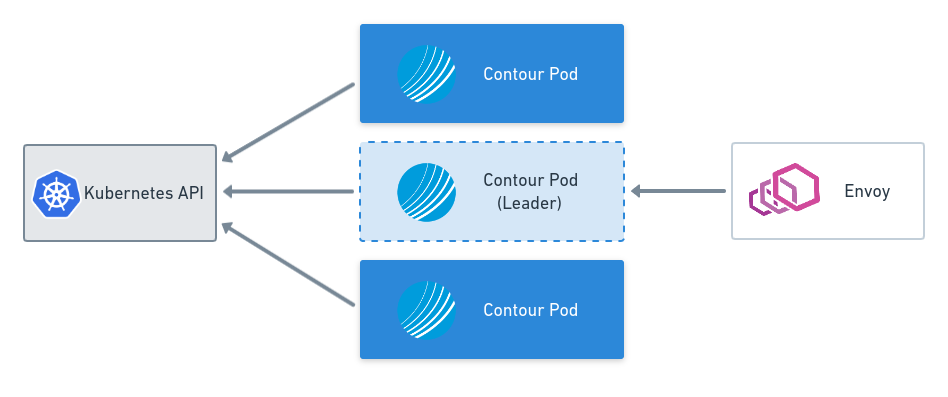

Each instance of Contour opens a connection to the Kubernetes API server to watch for changes to objects in the cluster. Contour is concerned with Services, Endpoints, Secrets, Ingress, and IngressRoute objects. Having multiple readers of the Kubernetes API is fine (many components work this way); however, because Contour also updates the status of IngressRoute objects, multiple writers (that is, multiple instances of Contour) can conflict when each one attempts to update the same status. Additionally, each instance of Contour may process events from Kubernetes at a different time, so different instances can pass different configurations to Envoy.

In leader election mode, only one Contour pod in a deployment, the leader, opens its gRPC endpoint to serve requests from Envoy. All other Contour instances continue to watch the API server but do not serve gRPC requests, so every Envoy instance takes its configuration from the same Contour instance.

Leader election is currently opt-in. In future versions of Contour, we plan to make leader election mode the default.

For more information, please consult the documentation on upgrading.
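One thing worth checking during an upgrade: the `leaderelection` settings in the sample configuration file later in this post name a ConfigMap, which suggests the election lock is stored in a ConfigMap. If so, Contour's service account would presumably need permission to write to it. The Role below is only a sketch of what such a grant could look like. The Role name, the namespace, and the verb list are assumptions made for illustration; the upgrade documentation is the authority on what v0.15 actually requires.

```yaml
# Sketch only: the name, namespace, and verbs are illustrative assumptions.
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: contour-leaderelection   # hypothetical name
  namespace: heptio-contour      # assumed; use the namespace that holds the lock ConfigMap
rules:
- apiGroups: [""]                # "" is the core API group, where ConfigMaps live
  resources: ["configmaps"]
  verbs: ["get", "list", "watch", "create", "update"]
```

A Role grants nothing by itself; a RoleBinding has to tie it to the service account that Contour runs as.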

Contour Configuration File

Until now, Contour's configuration options were passed as command-line arguments to the Contour process, so changing a parameter meant updating the Deployment spec.

Here’s an example spec from a Kubernetes Deployment manifest:

    containers:
    - args:
      - serve
      - --incluster
      - --enable-leader-election
      - --xds-address=0.0.0.0
      - --xds-port=8001

Now, with v0.15, a configuration file can hold the settings that apply to a Contour installation as a whole. Per-Ingress or per-Route configuration is still drawn from the objects and CRDs in the Kubernetes API server.

Sample configuration file:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: contour
      namespace: heptio-contour
    data:
      contour.yaml: |
        # should contour expect to be running inside a k8s cluster
        # incluster: true
        #
        # path to kubeconfig (if not running inside a k8s cluster)
        # kubeconfig: /path/to/.kube/config
        #
        # disable ingressroute permitInsecure field
        # disablePermitInsecure: false
        tls:
          # minimum TLS version that Contour will negotiate
          # minimumProtocolVersion: "1.1"
        # The following config shows the defaults for the leader election.
        # leaderelection:
          # configmap-name: contour
          # configmap-namespace: leader-elect
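For Contour to read this file, the ConfigMap has to be mounted into the Contour pod and its path handed to `contour serve`. The fragment below is a minimal sketch of that wiring, not a complete manifest. It assumes the `--config-path` flag of `contour serve`, and the volume name `contour-config` and mount path `/config` are illustrative choices. Check the example manifests shipped with your Contour version before relying on it.

```yaml
# Sketch only: a fragment of a Deployment's pod template, not a full manifest.
spec:
  containers:
  - name: contour
    args:
    - serve
    - --incluster
    # Assumed flag: tells Contour where to find the mounted configuration file.
    - --config-path=/config/contour.yaml
    volumeMounts:
    - name: contour-config       # illustrative volume name
      mountPath: /config         # illustrative mount path
      readOnly: true
  volumes:
  - name: contour-config
    configMap:
      name: contour              # the ConfigMap from the sample above
```

Because the key under `data` in the ConfigMap is `contour.yaml`, Kubernetes exposes it as the file `/config/contour.yaml` inside the container.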

More new features in Contour v0.15

Version 0.15 also includes several fixes. It patches several CVEs related to HTTP/2 by upgrading Envoy to v1.11.1. To reduce the number and frequency of configuration updates sent to Envoy, Contour now ignores Secrets and Services that are not referenced by an active Ingress or IngressRoute object.

We recommend reading the full release notes for Contour v0.15 as well as digging into the upgrade guide, which outlines some key changes to be aware of when moving from v0.14 to v0.15.

Future Plans

The Contour project is very community driven and the team would love to hear your feedback! Many features (including IngressRoute) were driven by users who needed a better way to solve their problems. We’re working hard to add features to Contour, especially in expanding how we approach routing.

If you are interested in contributing, a great place to start is to comment on one of the issues labeled with Help Wanted and work with the team on how to resolve them.

We’re immensely grateful for all the community contributions that help make Contour even better! For v0.15, special thanks go out to:

Related Content

Secure xDS Server Communication with Contour v0.14

This blog post covers key features of the Contour v0.14.0 release including securing xDS communication with Envoy.

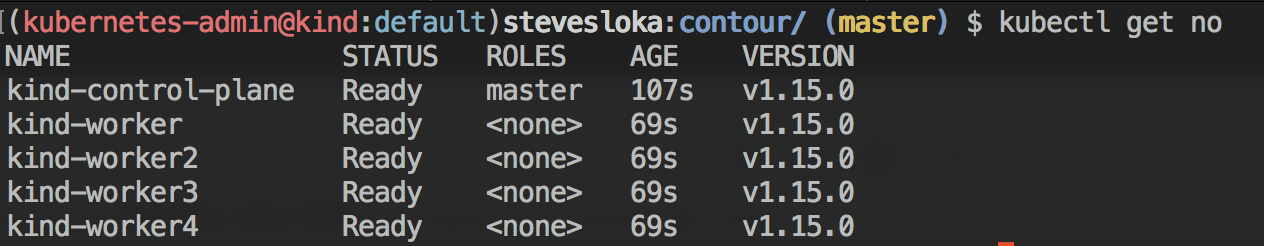

Kind-ly running Contour

This blog post demonstrates how to install kind, create a cluster, deploy Contour, and then deploy a sample application, all locally on your machine.

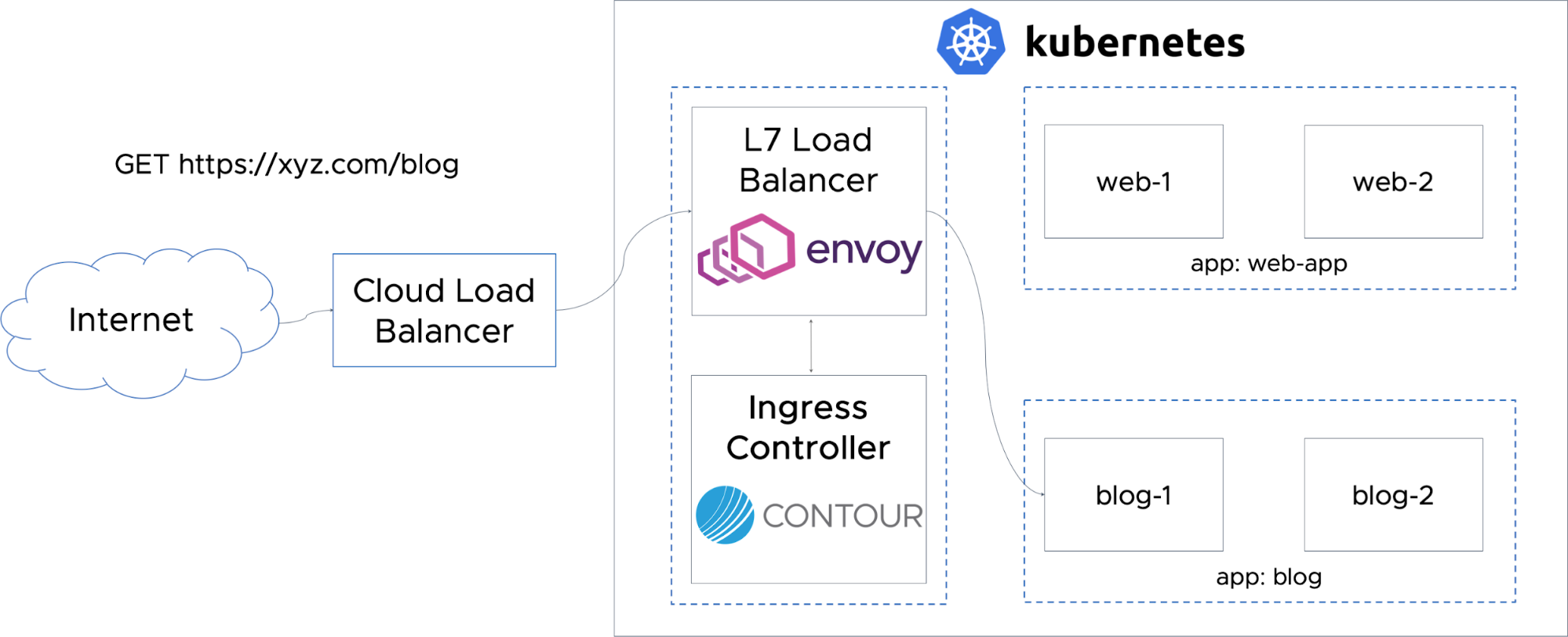

Routing Traffic to Applications in Kubernetes with Contour

One of the most critical needs in running workloads at scale with Kubernetes is efficient and smooth traffic ingress management at the Layer 7 level.
